Evidence accumulation to determine effective climate change education (EwiK)

There is scientific consensus that human activities contribute significantly to climate change (IPCC 2018; Cook 2013; Dietz 2021). The consequences for many natural and social systems can now also be determined with a high degree of certainty. Credible estimates suggest that we have fewer than twelve years to address the looming climate catastrophe and to ensure, through immediate action, that global temperatures do not rise 2°C above pre-industrial levels (IPCC 2018; UNFCCC 2016).

Education for sustainable development (ESD) and climate change education (CCE) are seen as playing a particularly important role in the necessary promotion of public awareness of climate change (e.g. UNFCCC 2015).

However, ESD and CCE will only fulfil this role if they demonstrably develop learners' awareness of climate change, its consequences and the possibilities for action. This means that learners understand the climate system, engage with climate change and can take appropriate mitigation and adaptation measures. The literature contains numerous proposals for promoting such goals, covering both content and methods (Wibeck 2014; Brownlee et al. 2013; González-Gaudiano & Meira-Cartea 2010).

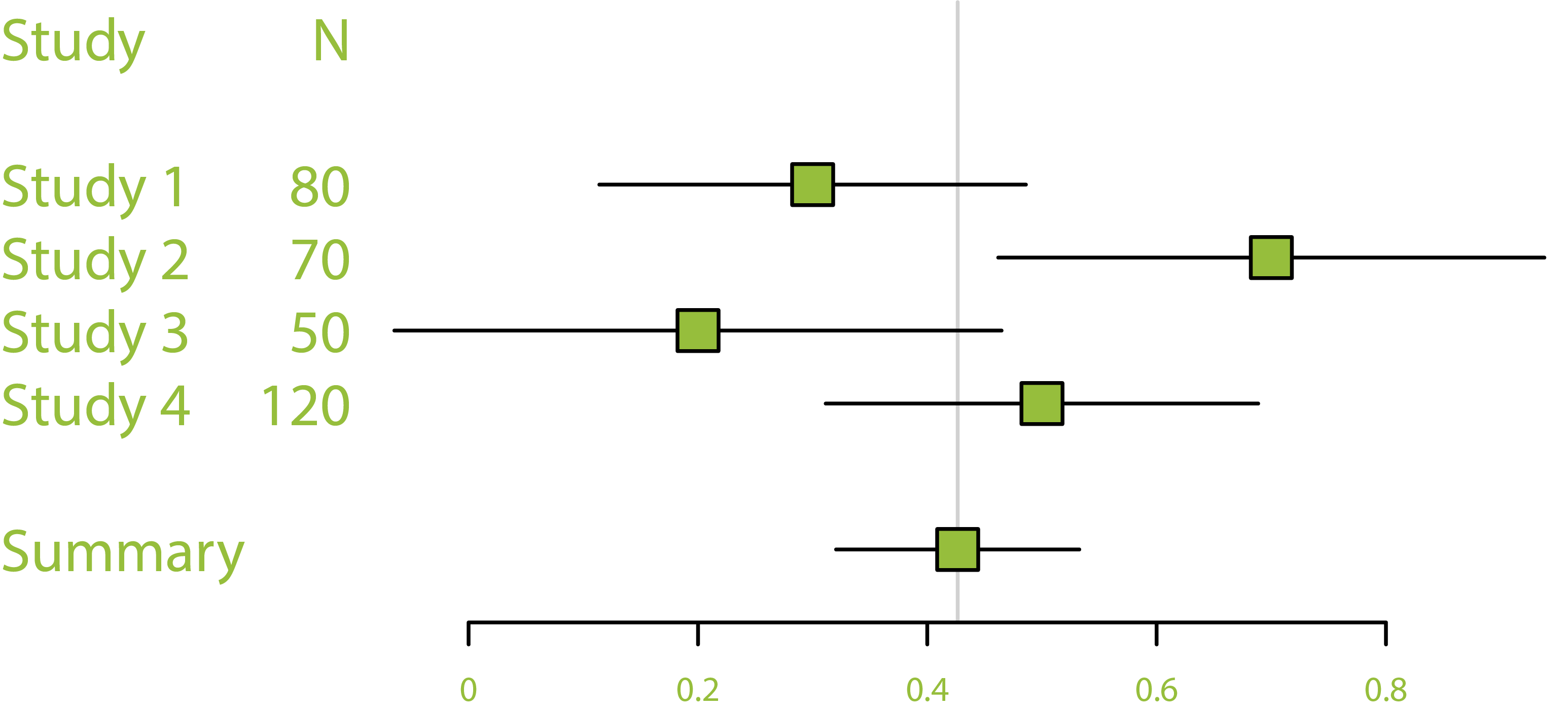

The question now arises as to which of these proposed methods, procedures and contents actually promote the recommended goals effectively. To answer this question, EwiK will examine the effectiveness of existing intervention studies on CCE. Using meta-analytical methods, we will determine an average effectiveness across all studies and identify moderating variables that explain differences in effectiveness between the studies. To this end, we will carry out the typical work packages of a meta-analysis: 1) criteria-based literature search and selection, 2) coding of the studies, 3) extraction of the effect sizes of the individual studies, 4) analysis of moderator effects and 5) discussion and classification of the findings (Borenstein et al. 2010; Littell et al. 2008; PRISMA).
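The two analytical goals named above, an average effect across studies and a comparison across levels of a moderator, can be illustrated with a minimal Python sketch. It is not the project's actual analysis pipeline. It assumes that each study contributes one standardized effect size with a known sampling variance, and it uses the DerSimonian-Laird random-effects estimator as one common choice, which the project description does not specify. The function names, the `format` moderator and all numbers are hypothetical and serve only as illustration.

```python
import math


def random_effects_pool(effects, variances):
    """Pool effect sizes with the DerSimonian-Laird random-effects estimator.

    Assumption: one effect size and one sampling variance per study.
    """
    k = len(effects)
    w = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sum_w = sum(w)
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w

    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))

    # Between-study variance tau^2, truncated at zero
    if k < 2:
        tau2 = 0.0
    else:
        c = sum_w - sum(wi ** 2 for wi in w) / sum_w
        tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau^2 to each study's sampling variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return {"pooled": pooled, "se": se, "tau2": tau2, "q": q, "k": k}


def pool_by_moderator(studies, moderator):
    """Subgroup analysis: pool the studies separately per moderator level."""
    groups = {}
    for study in studies:
        groups.setdefault(study[moderator], []).append(study)
    return {
        level: random_effects_pool(
            [s["g"] for s in group], [s["var"] for s in group]
        )
        for level, group in groups.items()
    }


if __name__ == "__main__":
    # Hypothetical coded studies: effect size g, its variance, one moderator
    studies = [
        {"g": 0.20, "var": 0.01, "format": "lecture"},
        {"g": 0.40, "var": 0.01, "format": "lecture"},
        {"g": 0.60, "var": 0.01, "format": "inquiry"},
        {"g": 0.70, "var": 0.02, "format": "inquiry"},
    ]
    overall = random_effects_pool(
        [s["g"] for s in studies], [s["var"] for s in studies]
    )
    print("overall:", overall)
    for level, result in pool_by_moderator(studies, "format").items():
        print(level, result)
```

Pooling within subgroups is the simplest way to inspect a categorical moderator. A full analysis would typically test moderators formally, for example with meta-regression, and would rely on an established meta-analysis package rather than hand-written code.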